cPanel Webserver on EC2 (or How I Learned to Stop Worrying and Love the Cloud)

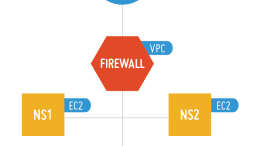

It’s been a long time coming, but my company now runs all of its web hosting on Amazon Web Services EC2. Getting here was quite a journey. For more than 15 years we’ve cycled…